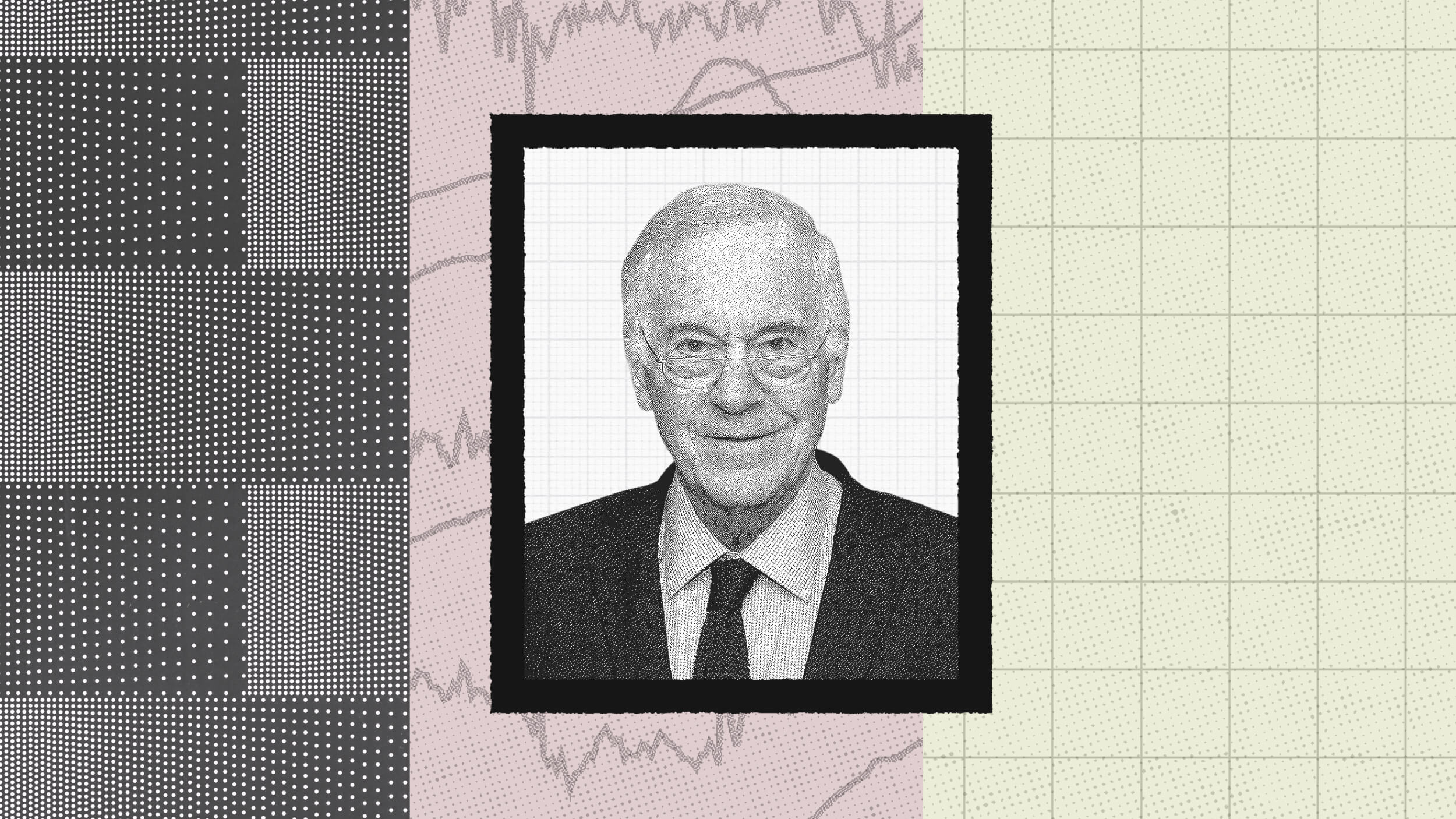

The results are in from the first study of what encourages people to bullshit and what deters them

“Our country doesn’t do many things well, but when it comes to big occasions, no one else comes close,” so claimed an instructor I heard at the gym this week. He might be an expert in physical fitness but it’s doubtful this chap was drawing on any evidence or established knowledge about the UK’s standing on the international league table of pageantry or anything else, and what’s more, he probably didn’t care about his oversight. What he probably did feel is a social pressure to have an opinion on the royal wedding that took place last weekend. To borrow the terminology of US psychologist John Petrocelli, he was probably bullshitting.

“In essence,” Petrocelli explains in his new paper in the Journal of Experimental Social Psychology, “the bullshitter is a relatively careless thinker/communicator and plays fast and loose with ideas and/or information as he bypasses consideration of, or concern for, evidence and established knowledge.”

While pontificating on Britain’s prowess at pomp is pretty harmless, Petrocelli has more serious topics in mind. “Whether they be claims or expressions of opinions about the effects of vaccinations, the causes of success and failure, or political ideation, doing so with little to no concern for evidence or truth is wrong,” he writes.

There are countless psychology studies into lying (which is different from bullshitting because it involves deliberately concealing the truth) and an increasing number into fake news (again, unlike BS, deliberate manipulation is part of it). However, there are virtually none on bullshitting. Now Petrocelli has made a start, identifying several social factors that encourage or deter the practice.

The research began with nearly 600 people on Amazon’s Mechanical Turk survey website reading that a man named Jim had pulled out of running for a seat on the City Council. Participants thought they were taking part in a study of how we ascribe causes to others’ behaviour (the term bullshitting did not appear anywhere in the experiment instructions), and after they read about Jim’s withdrawal, they were invited to list five possible reasons and any related thoughts on why Jim might have done this – a perfect opportunity for bullshitters to let rip!

Petrocelli varied the precise conditions to see how this affected people’s propensity to bullshit when answering. For starters, he manipulated background knowledge by first giving half the participants 13 facts about Jim, such as that he liked to be admired. Petrocelli also manipulated the social pressure to give an opinion, telling half the participants they didn’t have to list any reasons if they didn’t want to. Finally, Petrocelli manipulated audience knowledge, telling half the participants that their reasons would be scored by judges who knew Jim extremely well.

To measure bullshitting, Petrocelli later asked the participants to score their own reasons, based on how much they had been concerned with genuine evidence and established knowledge; essentially they assessed their own BS levels.

All the factors that Petrocelli manipulated made a difference. Overall, the participants who received no background information on Jim admitted to engaging in more bullshitting. Participants also bullshitted more when they felt more obliged to give an opinion, and when their audience was not knowledgeable about him. These latter two factors (obligation and audience knowledge) interacted, with social obligation being more potent. When feeling obligated to have an opinion, uninformed participants bullshitted a lot even when they knew their audience knew more than they did.

“Anything that an audience may do to enhance the social expectation that one should have or provide an opinion appears to increase the likelihood of the audience receiving bullshit,” Petrocelli said.

Without such pressure, however, the risk of being caught out was a deterrent to BS. Petrocelli further explored what he calls the “ease of passing bullshit hypothesis” in a follow-up experiment. Online participants were invited to justify their attitudes on hot-button social issues: affirmative action, nuclear weapons and capital punishment. Crucially, Petrocelli manipulated who participants thought would be reading their justifications – either he gave participants no information about their audience or he told them a sociology professor with expertise on these issues would be reading their views (and further, that the prof either agreed with their positions, disagreed with them, or kept his own position concealed).

The participants subsequently rated their own BS levels (i.e. they rated whether they’d been concerned with evidence or established knowledge). Those told nothing about a professor, and those who thought the professor agreed with them, admitted to more bullshitting. Those participants who knew a professor with opposing views was going to read their arguments admitted to the least bullshitting. Fear of being called out, in other words, appears to be a strong deterrent to spewing BS.

Where does this leave us? It’s a shame there was no objective marker of bullshitting in this research – sure, it makes sense to ask people if they considered any evidence or knowledge, but at least some kind of third-party assessment would have been useful. You might also have your doubts about the realism of these online experiments. Giving inconsequential opinions on a survey website is rather removed from the real-life scourge of the office colleague who is forever sharing their questionable wisdom on what you need to do to stay healthy or how the country should be run. Nonetheless, all empirical investigations must start somewhere and Petrocelli said his studies “provide a great deal of information relevant to the social psychology of bullshitting.”

The professor is under no illusions, though, about how hard it will be to translate his insights into practical anti-BS strategies. While his findings suggest that calling out BS (or the mere threat that it might be called out) can reduce the propensity for bullshitting to take place, Petrocelli notes that doing so “may not necessarily enhance evidence-based communication” and that instead, it may just be a “conversation killer”. He concludes that “future research will do well to respond to such questions empirically and determine effective ways of enhancing the concern for evidence and truth.”

Christian Jarrett (@Psych_Writer) is the editor of BPS Research Digest

This article was originally published on BPS Research Digest.