50 million Facebook users’ private information compromised by Cambridge Analytica, Trump’s digital wing

The moral of the story, it should be said upfront, is to be very careful about who you share your information with.

Strategic Communications Laboratories (or SCL, if you’re into the whole brevity thing) has been banned from Facebook for violating the platform’s terms of service. This, in and of itself, might not appear to have much significance. But Cambridge Analytica, the SCL-owned political data analysis firm, had digital psychographic profiles of 50 million Americans and was using those profiles to let SCL effectively, and accurately, predict the political weather.

How SCL managed to achieve this reads like the opening pages of a dystopian novel. In 2014, 270,000 users installed an app called ‘thisisyourdigitallife’, built by a man named Aleksandr Kogan, which ostensibly was a personality quiz based on your Facebook data. It’s one of hundreds of thousands of Facebook apps that use your Facebook data, which includes your location, every ‘like’, and every click you’ve ever made on the site (and often on others). However, thisisyourdigitallife captured a snapshot not just of the user’s own data but also of the data of any friends who had not adequately set their privacy settings. So, while thisisyourdigitallife was only installed by about a quarter-million people, it captured the data of approximately 50,000,000.

While Facebook is trying to spin this as something it didn’t have much to do with (as, in its words, users “knowingly signed up”), it’s the biggest personal data breach in the company’s history. Although Facebook says that only 270,000 people downloaded the thisisyourdigitallife app, a loophole allowed 50,000,000 psychographic profiles to be built. As per WIRED:

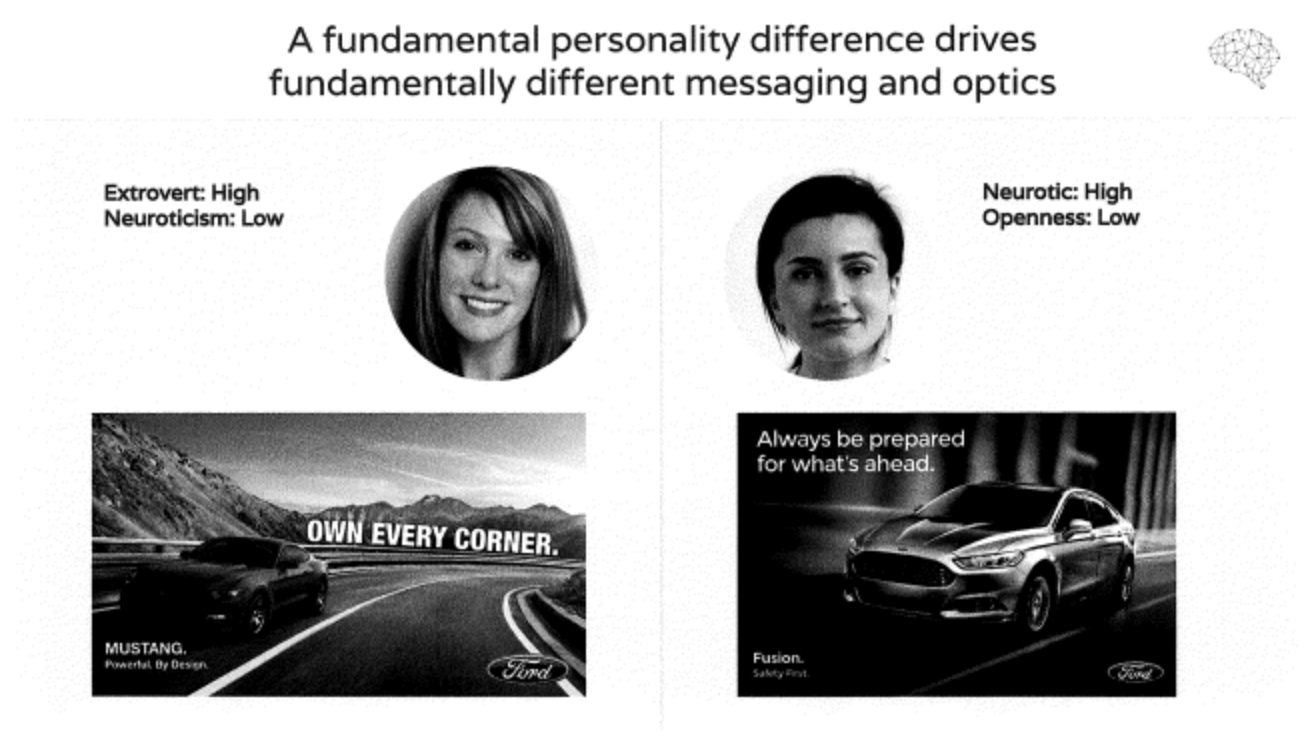

Though Facebook says just 270,000 people downloaded the app, a loophole at the time apparently allowed Kogan to collect vastly more information. Until 2014, apps could also collect information on every users’ entire friend network. Facebook shut down that capability for app developers in mid-2014, but offered some apps that were already up and running a small grace period before cutting them off. That timing roughly lines up with Kogan’s research. Of the 50 million accounts Kogan had data on, the New York Times and Guardian reports say, 30 million had complete enough profiles that Cambridge could create psychographic profiles of them. Different than demographic profiles, these describe people based on their personality types.

Under Facebook’s terms and conditions concerning data collection, Aleksandr Kogan was supposed to delete the data he had accumulated. Instead, Kogan sold the data to SCL. SCL just happens to have a data analytics wing called Cambridge Analytica, which turned the data into psychographic profiles of 50 million Americans. Emboldened by this incredible tool, the Trump campaign used these psychographic profiles to great effect in 2016, more or less winning Donald Trump the election by giving him the louder message. Cambridge Analytica once featured Steve Bannon on its board and is also owned in part by Robert Mercer, a reclusive libertarian billionaire who spent millions on Trump’s campaign.

Still curious as to what this has to do with you?

At Big Think, we regularly A/B test social media headlines to figure out what the best message is for any given article. Two takes on the same article are pushed to two groups, and we find out within half an hour or so which was the more effective. At best, this gives us a nice bump in Facebook traffic: the more clickable the online package, the more traffic we get. Inevitably, if you have 2 billion Facebook profiles, the louder the message is, the more it gets heard. Cambridge Analytica was, in effect, A/B testing the entire country and homing in on the most effective message to appeal to its audience.

(For instance, would a headline including the words “Hillary Clinton” and “Benghazi” be more effective than “Hillary Clinton” and “emails”? Which headline would get more outrage, and thus more engagement?)
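For the curious, here is a minimal sketch of how a headline A/B test like the one described above might be scored. It is not Big Think’s actual tooling, and certainly not Cambridge Analytica’s: the function name `ab_test`, the two-proportion z-test, and every number in the example are assumptions chosen purely for illustration.

```python
import math


def ab_test(clicks_a, views_a, clicks_b, views_b):
    """Compare two headlines' click-through rates with a two-proportion z-test.

    Hypothetical helper for illustration only. Returns
    (rate_a, rate_b, z, p_value), where p_value is two-sided.
    """
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b

    # Pooled click-through rate under the assumption that both headlines
    # perform identically.
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))

    if se == 0:
        # No variation at all (e.g. zero clicks on both): nothing to compare.
        return rate_a, rate_b, 0.0, 1.0

    z = (rate_b - rate_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value


# Made-up numbers: headline A shown to 5,000 people, headline B to another 5,000.
rate_a, rate_b, z, p = ab_test(clicks_a=120, views_a=5000, clicks_b=165, views_b=5000)
winner = "B" if rate_b > rate_a else "A"
print(f"A: {rate_a:.2%}  B: {rate_b:.2%}  z = {z:.2f}  p = {p:.4f}  leading: {winner}")
```

A small p-value suggests the gap between the two headlines is unlikely to be chance. Run the same comparison across thousands of messages and millions of profiles, and you get a sense of the scale at issue.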

Libertarians might shrug: so what? Cambridge Analytica created an effective data mining machine. But just because you can do something doesn’t mean that you should. This breach of user data not only raises a moral problem but presents an entirely new moral spectrum: if a company has created an online version of ‘you’ and is using it without your knowledge, is there anything you can do? How much of digital you do you own?