In Quantum Physics, Even Humans Act As Waves

Quantum physics just keeps getting weirder, even as it gets more fascinating.

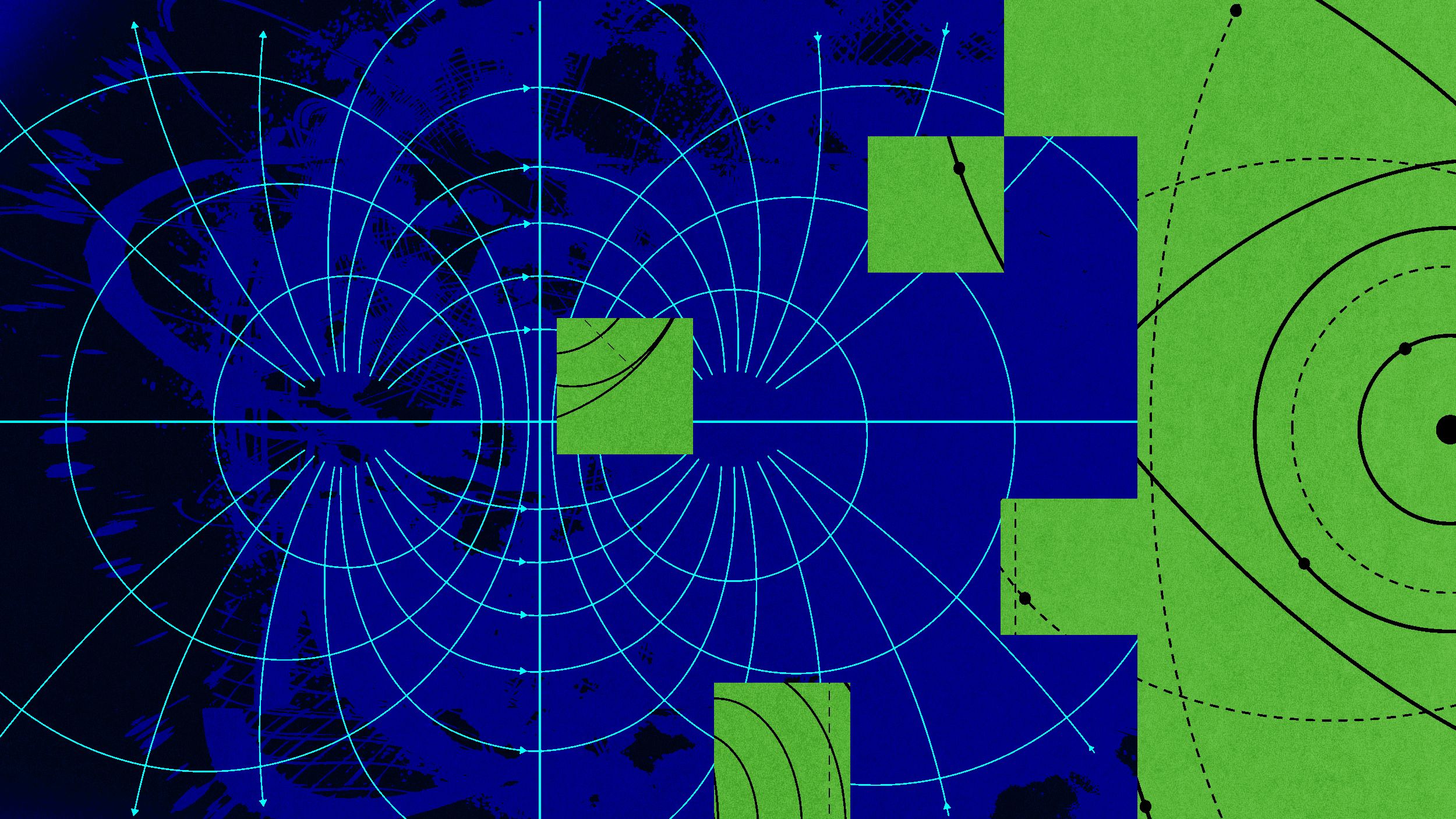

“Is it a wave or is it a particle?” Never has such a simple question had such a complicated answer as in the quantum realm. The answer, perhaps frighteningly, depends on how you ask the question. Pass a beam of light through two slits, and it acts like a wave. Fire that same beam of light into a conducting plate of metal, and it acts like a particle. Under appropriate conditions, we can measure either wave-like or particle-like behavior for photons — the fundamental quantum of light — confirming the dual, and very weird, nature of reality.

This dual nature of reality isn’t restricted to light, either; it has been observed for all quantum particles: electrons, protons, neutrons, and even fairly large collections of atoms. In fact, we can quantify just how “wave-like” a particle or set of particles is. Even an entire human being, under the right conditions, can act like a quantum wave. (Although, good luck with measuring that.) Here’s the science behind what that all means.

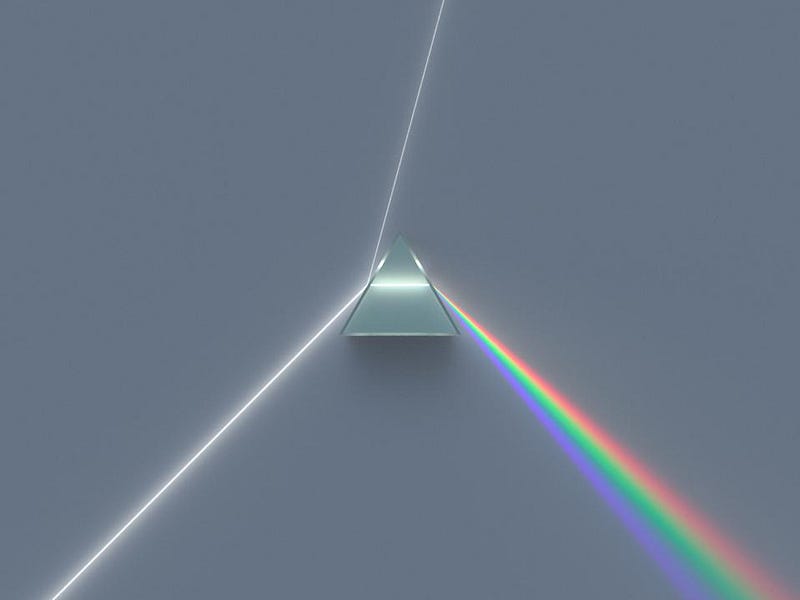

The debate over whether light behaves as a wave or a particle goes all the way back to the 17th century, when two titanic figures in physics history took opposite sides on the issue. On the one hand, Isaac Newton put forth a “corpuscular” theory of light, where it behaved the same way that particles did: moving in straight lines (rays) and refracting, reflecting, and carrying momentum just as any other kind of material would. Newton was able to predict many phenomena this way, and could explain how white light was composed of many other colors.

On the other hand, Christiaan Huygens favored the wave theory of light, noting features like interference and diffraction, which are inherently wave-like. Huygens’ work on waves couldn’t explain some of the phenomena that Newton’s corpuscular theory could, and vice versa. Things started to get more interesting in the early 1800s, however, as novel experiments began to truly reveal the ways in which light was intrinsically wave-like.

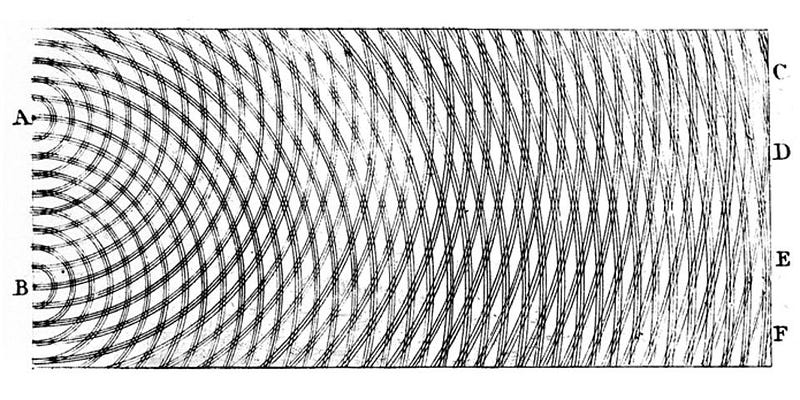

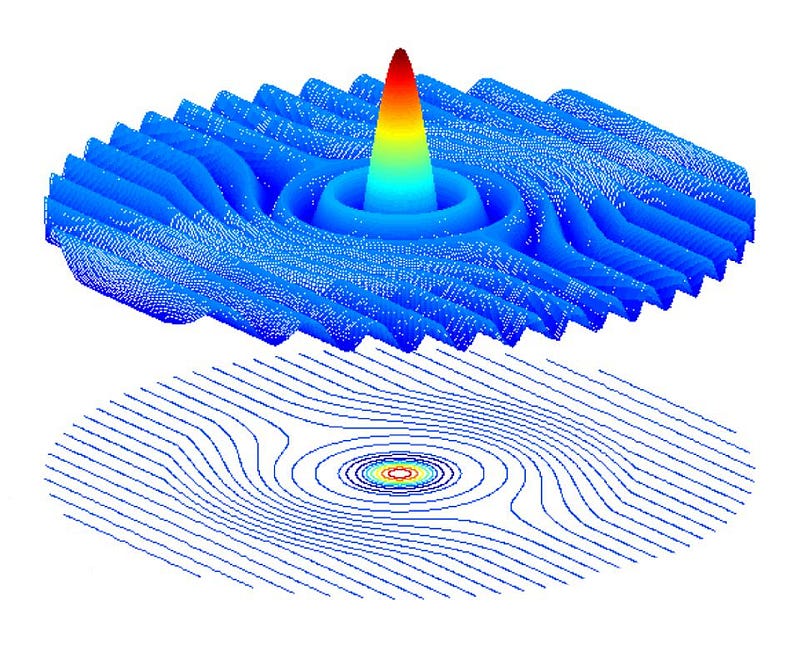

If you take a tank filled with water and create waves in it, and then set up a barrier with two “slits” that allow the waves on one side to pass through to the other, you’ll notice that the ripples interfere with one another. At some locations, the ripples add up, creating larger-magnitude ripples than a single wave alone would permit. At other locations, the ripples cancel one another out, leaving the water perfectly flat even as the ripples go by. This interference pattern, with its alternating regions of constructive (additive) and destructive (subtractive) interference, is a hallmark of wave behavior.

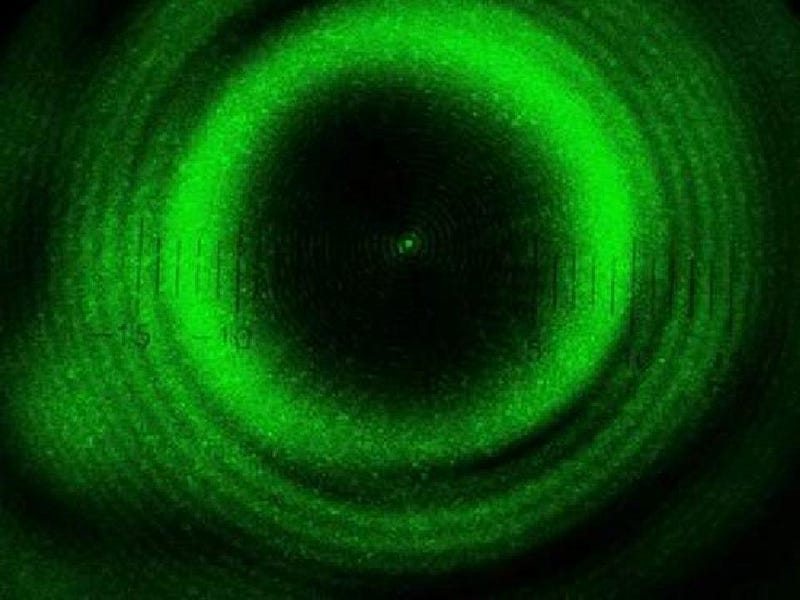

That same wave-like pattern shows up for light, as first noted by Thomas Young in a series of experiments performed over 200 years ago. In subsequent years, scientists began to uncover some of the more counterintuitive wave properties of light, such as an experiment where monochromatic light shines around a sphere, creating not only a wave-like pattern on the outside of the sphere, but a central peak in the middle of the shadow as well.

Later in the 1800s, Maxwell’s theory of electromagnetism allowed us to derive a form of charge-free radiation: an electromagnetic wave that travels at the speed of light. At last, the light wave had a mathematical footing where it was simply a consequence of electricity and magnetism, an inevitable result of a self-consistent theory. It was by thinking about these very light waves that Einstein was able to devise and establish the special theory of relativity. The wave nature of light was a fundamental reality of the Universe.

But it wasn’t a universal one. Light also behaves as a quantum particle in a number of important ways.

- Its energy is quantized into individual packets called photons, where each photon contains a specific amount of energy.

- Photons above a certain energy can knock electrons off of atoms, ionizing them; photons below that energy cannot, no matter how intense the light is.

- And it’s possible to create and send individual photons, one at a time, through any experimental apparatus we can devise.
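The threshold behavior in the second point can be made concrete with the photon-energy relation E = hc/λ. This minimal Python sketch assumes hydrogen’s ionization energy (about 13.6 eV) as the threshold and uses a deliberately simplified model in which every photon is absorbed and acts on its own; the function names are illustrative, not from any library.

```python
# Photon energy E = h*c/lambda compared against an ionization threshold.
H = 6.62607015e-34    # Planck's constant, J*s
C = 299_792_458.0     # speed of light, m/s
EV = 1.602176634e-19  # joules per electron-volt

# Assumed threshold: hydrogen's ionization energy, roughly 13.6 eV.
HYDROGEN_IONIZATION_EV = 13.6


def photon_energy_ev(wavelength_m: float) -> float:
    """Energy carried by one photon of the given wavelength, in eV."""
    return H * C / wavelength_m / EV


def electrons_ejected(wavelength_m: float, n_photons: int,
                      threshold_ev: float = HYDROGEN_IONIZATION_EV) -> int:
    """Toy model: each photon acts alone, and every photon at or above the
    threshold frees exactly one electron. Intensity (n_photons) changes how
    many electrons come out, but never whether any do."""
    if photon_energy_ev(wavelength_m) >= threshold_ev:
        return n_photons
    return 0


for wavelength_nm in (500.0, 50.0):
    wl = wavelength_nm * 1e-9
    print(f"{wavelength_nm:5.0f} nm: {photon_energy_ev(wl):5.2f} eV/photon, "
          f"electrons from 10^20 photons: {electrons_ejected(wl, 10**20)}")
```

A visible 500 nm photon carries only about 2.5 eV, so even an enormous number of them frees nothing in this model, while a single 50 nm photon clears the threshold on its own.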

Those developments and realizations, when synthesized together, led to arguably the most mind-bending demonstration of quantum “weirdness” of all.

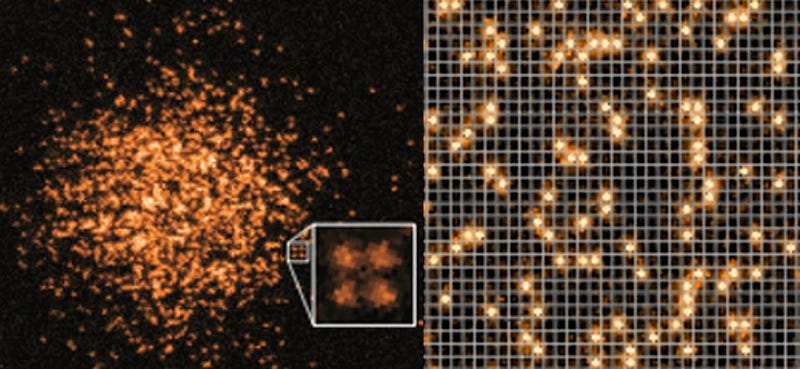

If you take a photon and fire it at a barrier that has two slits in it, you can measure where that photon strikes a screen a significant distance away on the other side. If you start adding up these photons, one-at-a-time, you’ll start to see a pattern emerge: an interference pattern. The same pattern that emerged when we had a continuous beam of light — where we assumed that many different photons were all interfering with one another — emerges when we shoot photons one-at-a-time through this apparatus. Somehow, the individual photons are interfering with themselves.
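To see how single, particle-like detections can add up to wave-like fringes, here is a minimal Python sketch. It does not simulate the wave itself: it assumes the standard pattern for two ideal narrow slits, I ∝ cos²(π d x / λL) in the small-angle limit with the single-slit envelope ignored, and draws one photon landing spot at a time from that pattern by rejection sampling. The wavelength, slit separation, and screen distance are hypothetical values chosen for illustration.

```python
import math
import random

# Hypothetical apparatus, chosen only for illustration.
WAVELENGTH = 500e-9      # lambda, m
SLIT_SEPARATION = 50e-6  # d, m
SCREEN_DISTANCE = 1.0    # L, m
HALF_WIDTH = 0.03        # half-width of the recorded screen region, m


def two_slit_intensity(x: float) -> float:
    """Relative intensity (0..1) at screen position x: ideal narrow slits,
    small-angle approximation, single-slit envelope ignored."""
    phase = math.pi * SLIT_SEPARATION * x / (WAVELENGTH * SCREEN_DISTANCE)
    return math.cos(phase) ** 2


def detect_one_photon(rng: random.Random) -> float:
    """Draw a single landing position by rejection sampling from the pattern."""
    while True:
        x = rng.uniform(-HALF_WIDTH, HALF_WIDTH)
        if rng.random() < two_slit_intensity(x):
            return x


def build_up(n_photons: int, n_bins: int = 60, seed: int = 0) -> list[int]:
    """Send photons one at a time and histogram where each one lands."""
    rng = random.Random(seed)
    counts = [0] * n_bins
    bin_width = 2 * HALF_WIDTH / n_bins
    for _ in range(n_photons):
        x = detect_one_photon(rng)
        index = min(int((x + HALF_WIDTH) / bin_width), n_bins - 1)
        counts[index] += 1
    return counts


def render(counts: list[int]) -> str:
    """Draw the histogram as a strip of characters, darker = more hits."""
    shades = " .:-=+*#%@"
    peak = max(counts) or 1
    return "".join(shades[round(c / peak * (len(shades) - 1))] for c in counts)


for n in (10, 100, 5000):
    print(f"{n:>5} photons |{render(build_up(n))}|")
```

With a handful of photons the strip is just scattered specks; with thousands, bright and dark bands spaced λL/d apart (1 cm for these parameters) should stand out.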

Discussions of this experiment normally focus on the various setups you can build to attempt to measure (or not measure) which slit the photon goes through, destroying or maintaining the interference pattern in the process. That discussion is a vital part of exploring the dual nature of quanta, which behave as both waves and particles depending on how you interact with them. But we can do something else that’s equally fascinating: replace the photons in the experiment with massive particles of matter.

Your initial thought might go something along the lines of, “okay, well photons can act as both waves and particles, but that’s because photons are massless quanta of radiation. They have a wavelength, which explains the wave-like behavior, but they also carry a certain amount of energy, which explains the particle-like behavior.” Therefore, you might expect these matter particles to always act like particles, since they have mass, they carry energy, and, well, they’re literally defined as particles!

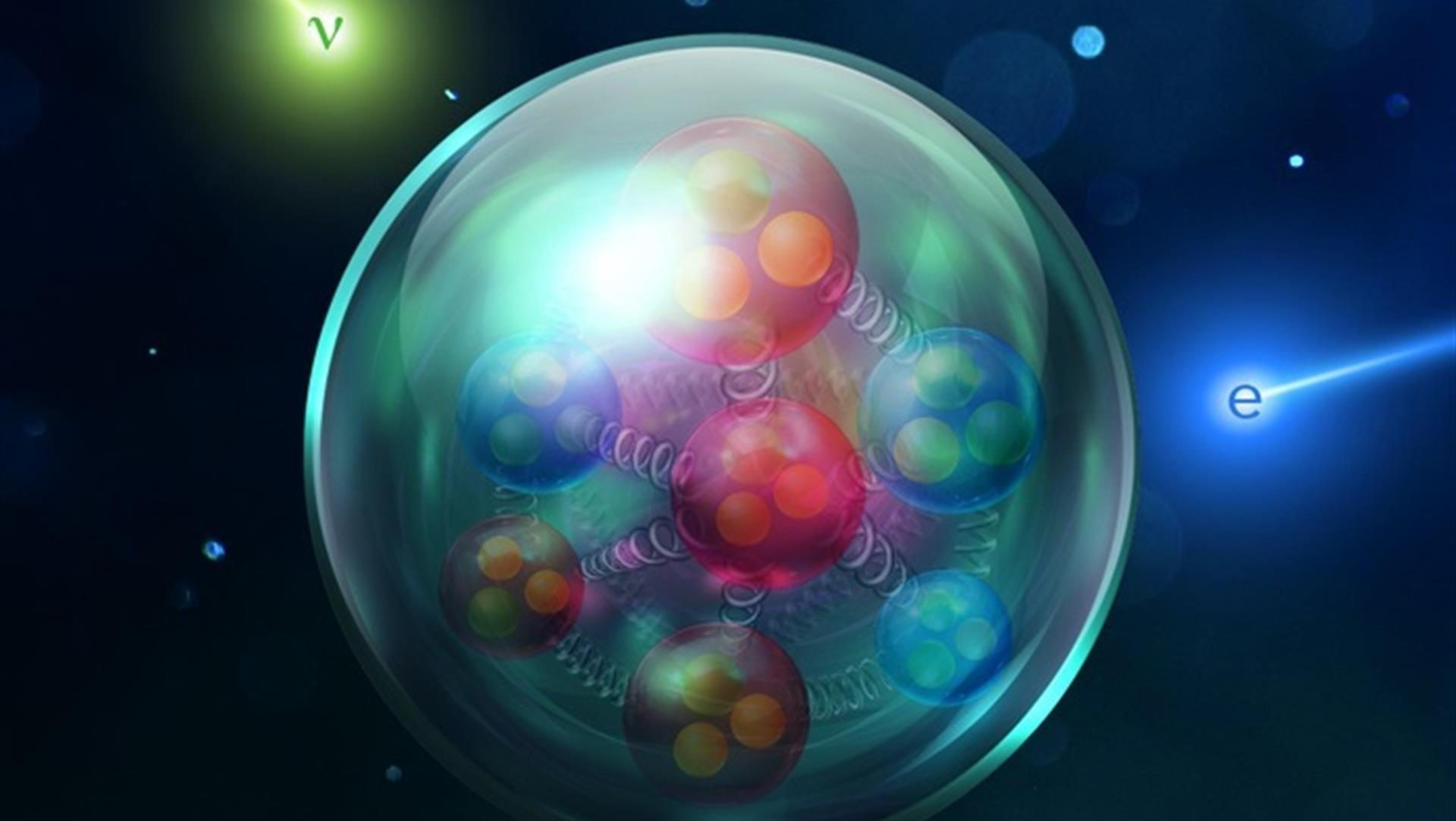

But in the early 1920s, physicist Louis de Broglie had a different idea. For photons, he noted, each quantum has an energy and a momentum, which are related to Planck’s constant, the speed of light, and the frequency and wavelength of each photon. Each quantum of matter also has an energy and a momentum, and is governed by the same Planck’s constant and speed of light. By rearranging terms in exactly the same way they’d be written down for photons, de Broglie was able to define a wavelength for both photons and matter particles: the wavelength is simply Planck’s constant divided by the particle’s momentum.
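The rearrangement described above can be sketched in a few lines, starting from the standard photon relations (E = hf, E = pc for a massless quantum, and c = fλ) and then carrying the result over to matter:

```latex
\begin{aligned}
E &= hf, \qquad E = pc, \qquad c = f\lambda \\
\Rightarrow\quad p &= \frac{E}{c} = \frac{hf}{c} = \frac{h}{\lambda} \\
\Rightarrow\quad \lambda &= \frac{h}{p},
\qquad \text{with } p = \gamma m v \approx m v \text{ for a matter particle with } v \ll c .
\end{aligned}
```

For photons the last line is just a rewriting of known relations; de Broglie’s leap was to apply it to massive particles as well.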

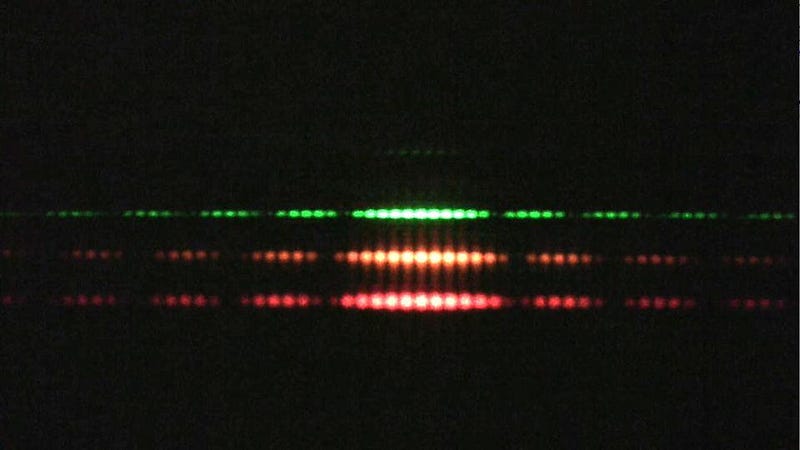

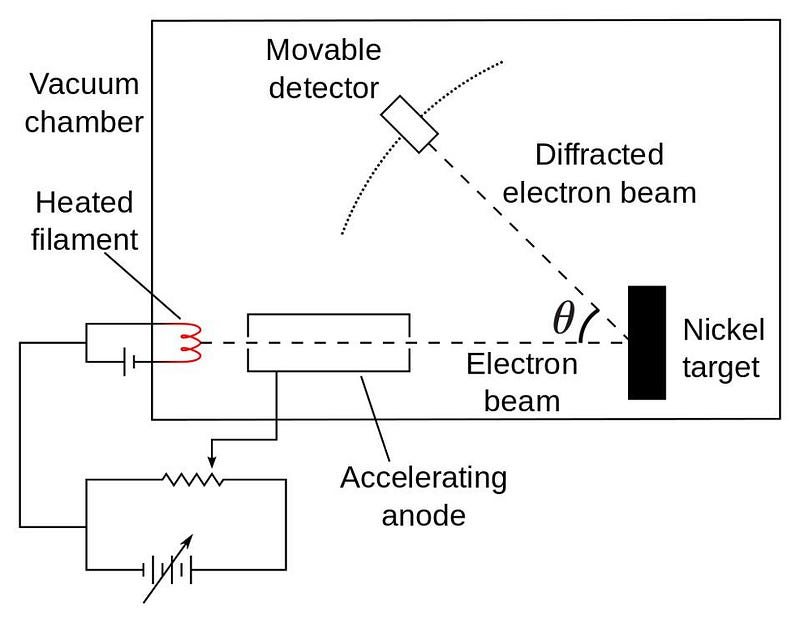

Mathematical definitions are nice, of course, but the real test of physical ideas always comes from experiments and observations: you have to compare your predictions with actual tests of the Universe itself. In 1927, Clinton Davisson and Lester Germer fired electrons at a nickel crystal, a target known to produce diffraction for X-ray photons, and the same kind of diffraction pattern resulted. Contemporaneously, George Paget Thomson fired electrons through thin metal foils, also producing diffraction patterns. Somehow, the electrons themselves, definitively matter particles, were also behaving as waves.
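A quick calculation shows why a crystal works as a diffraction grating for electrons. This minimal Python sketch uses the non-relativistic momentum p = √(2mK). It assumes a beam energy of 54 eV, the figure commonly associated with Davisson and Germer’s result, but treat it here simply as an illustrative input.

```python
import math

H = 6.62607015e-34      # Planck's constant, J*s
M_E = 9.1093837015e-31  # electron mass, kg
EV = 1.602176634e-19    # joules per electron-volt


def electron_wavelength_m(kinetic_energy_ev: float) -> float:
    """Non-relativistic de Broglie wavelength: lambda = h / sqrt(2 m K)."""
    momentum = math.sqrt(2 * M_E * kinetic_energy_ev * EV)
    return H / momentum


# Assumed illustrative beam energy of 54 eV.
print(f"{electron_wavelength_m(54) * 1e9:.3f} nm")
```

The result, roughly 0.17 nm, is comparable to the spacing between atoms in a crystal (a few tenths of a nanometer), which is exactly the condition for a regular lattice to diffract a wave.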

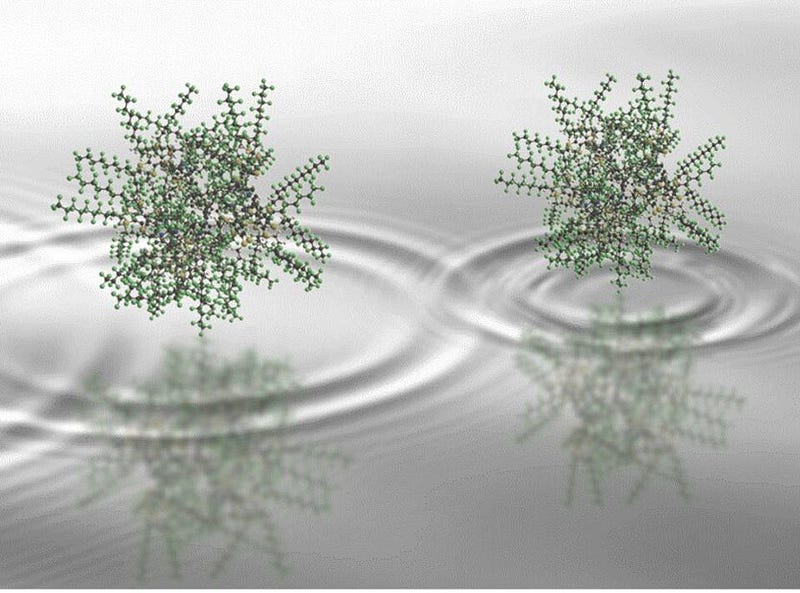

Subsequent experiments have revealed this wave-like behavior for many different forms of matter, including forms that are significantly more complicated than the point-like electron. Composite particles, like protons and neutrons, display this wave-like behavior as well. Neutral atoms, which can be cooled down to nanokelvin temperatures, have demonstrated de Broglie wavelengths that are larger than a micron: some ten thousand times larger than the atom itself. Even molecules with as many as 2000 atoms have been demonstrated to display wave-like properties.

Under most circumstances, the momentum of a typical particle (or system of particles) is sufficiently large that the effective wavelength associated with it is far too small to measure. A dust particle moving at just 1 millimeter per second has a wavelength that’s around 10⁻²¹ meters: about 100 times smaller than the smallest scales humanity’s ever probed at the Large Hadron Collider.

For an adult human being moving at the same speed, our wavelength is a minuscule 10⁻³² meters, or just a few hundred times larger than the Planck scale: the length scale at which our current laws of physics are expected to break down. Yet even with an enormous, macroscopic mass, and some 10²⁸ atoms making up a full-grown human, the quantum wavelength associated with a fully formed human is large enough to have physical meaning. In fact, just as for any particle, only two things determine your wavelength:

- your rest mass,

- and how fast you’re moving.
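Plugging numbers into λ = h/(mv) reproduces the scales quoted above. In this minimal Python sketch the masses are our own assumptions, since the article doesn’t state them: a dust grain of about one microgram (10⁻⁹ kg) and a 70 kg adult, both moving at 1 millimeter per second. The Planck length is taken as about 1.6 × 10⁻³⁵ m.

```python
H = 6.62607015e-34            # Planck's constant, J*s
PLANCK_LENGTH = 1.616255e-35  # m


def de_broglie_wavelength(mass_kg: float, speed_m_s: float) -> float:
    """lambda = h / (m v); non-relativistic momentum is fine at 1 mm/s."""
    return H / (mass_kg * speed_m_s)


SPEED = 1e-3  # 1 millimeter per second, as in the text

# Assumed masses (not given in the article).
objects = {"dust grain (1e-9 kg)": 1e-9, "adult human (70 kg)": 70.0}

for name, mass in objects.items():
    lam = de_broglie_wavelength(mass, SPEED)
    print(f"{name}: {lam:.1e} m, {lam / PLANCK_LENGTH:.1e} Planck lengths")
```

Because mass and speed enter only through their product, doubling either one halves the wavelength, and reducing either one lengthens it.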

In general, that means there are two things you can do to coax matter particles into behaving as waves. One is that you can reduce the mass of the particles to as small a value as possible, as lower-mass particles will have larger de Broglie wavelengths, and hence larger-scale (and easier to observe) quantum behaviors. But another thing you can do is reduce the speed of the particles you’re dealing with. Slower speeds, which are achieved at lower temperatures, translate into smaller values of momentum, which means larger de Broglie wavelengths and, again, larger-scale quantum behaviors.
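One common way to attach a single wavelength to a gas at temperature T is the thermal de Broglie wavelength, λ_th = h/√(2π m k_B T). The article doesn’t name this formula, so treat it as our chosen yardstick; the rubidium-87 atom and the temperatures in this minimal Python sketch are illustrative assumptions. It shows both levers at once: λ_th grows as either the mass or the temperature drops.

```python
import math

H = 6.62607015e-34       # Planck's constant, J*s
K_B = 1.380649e-23       # Boltzmann constant, J/K
AMU = 1.66053906660e-27  # atomic mass unit, kg


def thermal_de_broglie(mass_kg: float, temperature_k: float) -> float:
    """Thermal de Broglie wavelength: h / sqrt(2 * pi * m * k_B * T)."""
    return H / math.sqrt(2 * math.pi * mass_kg * K_B * temperature_k)


# Assumed example atom: rubidium-87, about 86.909 atomic mass units.
RB87_MASS = 86.909 * AMU

for temperature in (300.0, 1e-3, 1e-6, 1e-8):
    lam = thermal_de_broglie(RB87_MASS, temperature)
    print(f"T = {temperature:8.0e} K -> lambda_th = {lam:.2e} m")
```

At room temperature the wavelength comes out around a hundredth of a nanometer, while at 10 nanokelvin it exceeds a micron, in line with the ultracold-atom figures mentioned earlier. Since λ_th scales as 1/√(mT), quartering either the mass or the temperature doubles it.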

This property of matter opens up a fascinating new area of feasible technology: atomic optics. Whereas most of the imaging we conduct is strictly done with optics — i.e., light — we can use slow-moving atomic beams to observe nanoscale structures without disrupting them in the ways that high-energy photons would. As of 2020, there is an entire sub-field of condensed matter physics devoted to ultracold atoms and the study and application of their wave behavior.

There are many pursuits in science that seem so esoteric that most of us have a hard time envisioning how they’d ever become useful. In today’s world, many fundamental endeavors — for new highs in particle energies; for new depths in astrophysics; for new lows in temperature — seem like purely intellectual exercises. And yet, many technological breakthroughs that we take for granted today were unforeseeable by those who laid the scientific foundations.

Heinrich Hertz, who created and sent radio waves for the first time, thought he was merely confirming Maxwell’s electromagnetic theory. Einstein never imagined that relativity could enable GPS systems. The founders of quantum mechanics never considered advances in computation or the invention of the transistor. But today, we’re absolutely certain that the closer we get to absolute zero, the more the entire field of atomic optics and nano-optics will advance. Perhaps, someday, we’ll even be able to measure quantum effects for entire human beings. Before you volunteer, though, you might be happier to put a cryogenically frozen human to the test instead!

Ethan Siegel is the author of Beyond the Galaxy and Treknology. You can pre-order his third book, currently in development: the Encyclopaedia Cosmologica.