Hyperdimensional computing discovered to help AI robots create memories

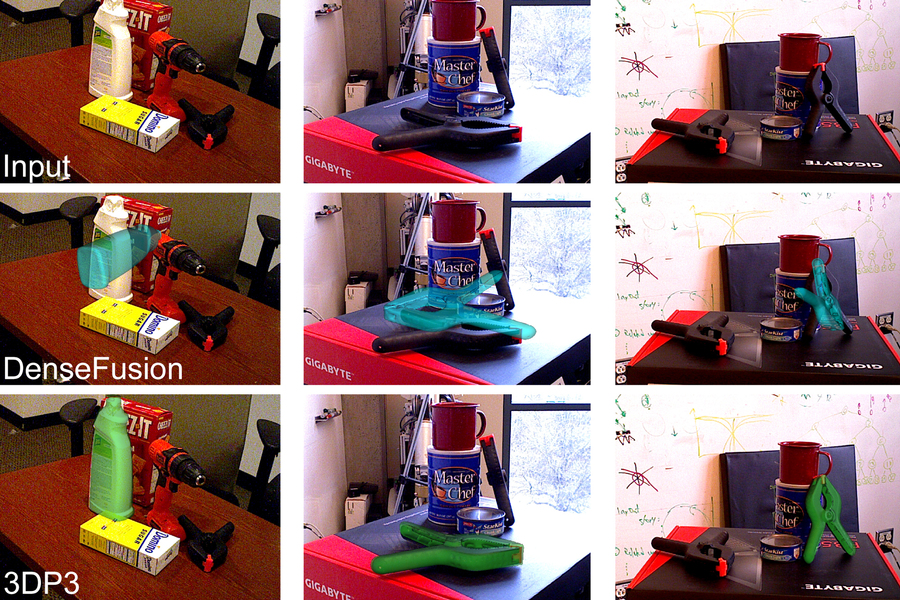

Credit: Perception and Robotics Group, University of Maryland.

- To become autonomous, robots need to perceive the world around them and move at the same time.

- Researchers create a theory of hyperdimensional computing to help store robot movement in high-dimensional vectors.

- This improvement in perception will allow artificial intelligences to create memories.

Do androids dream of electric sheep? Philip K. Dick famously wondered that in his stories, which explored what it meant to be human and robot in an age of advanced and widespread artificial intelligence. We aren’t quite in “Blade Runner” reality just yet, but a team of researchers has come up with a new way for robots to remember that may close the gap between robots and us for good.

For robots to be as proficient as humans in various tasks, they need to coordinate sensory data with motor capabilities. Scientists from the University of Maryland published a paper in the journal Science Robotics describing a potentially revolutionary approach to improve how AI handles sensorimotor representation using hyperdimensional computing theory.

What the researchers set out to create was a way to improve a robot’s “active perception” – its ability to integrate how it perceives the world around it with how it moves in that world. As they wrote in their paper, “we find that action and perception are often kept in separated spaces,” which they attribute to traditional thinking.

They proposed instead “a method of encoding actions and perceptions together into a single space that is meaningful, semantically informed, and consistent by using hyperdimensional binary vectors (HBVs).”

As their press release explains, HBVs work in very high-dimensional spaces, containing a plethora of information about different discrete items like an image or a sound or a command. These can be further grouped into sequences of discrete items and groupings of items and sequences.
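To make the idea of items, sequences, and groupings concrete, here is a minimal Python sketch of operations commonly used in hyperdimensional computing. It is an illustration under stated assumptions, not the researchers' implementation: the vector length `DIM`, the helper names, and the choice of XOR for binding, bit rotation for ordering, and majority vote for grouping are all assumptions made for this example.

```python
import random

DIM = 10_000            # assumed vector length, not taken from the paper
MASK = (1 << DIM) - 1   # keeps rotated values within DIM bits

def random_hbv(rng):
    """Draw a random binary vector, stored as a DIM-bit Python int."""
    return rng.getrandbits(DIM)

def hamming(a, b):
    """Fraction of differing bits: 0 for identical, about 0.5 for unrelated."""
    return bin(a ^ b).count("1") / DIM

def bind(a, b):
    """Associate two vectors with XOR; applying the same XOR again undoes it."""
    return a ^ b

def permute(a, shift=1):
    """Rotate bits left by `shift` positions to mark a position in a sequence."""
    shift %= DIM
    return ((a << shift) | (a >> (DIM - shift))) & MASK

def bundle(vectors):
    """Bitwise majority vote; the result stays similar to every input.
    Ties (possible with an even count) resolve to 0 in this sketch."""
    vectors = list(vectors)
    out = 0
    for bit in range(DIM):
        ones = sum((v >> bit) & 1 for v in vectors)
        if 2 * ones > len(vectors):
            out |= 1 << bit
    return out

rng = random.Random(0)
image, sound, command = (random_hbv(rng) for _ in range(3))

# A grouping of three discrete items: close to each member.
group = bundle([image, sound, command])

# An ordered sequence: each item is rotated by its position, then bound.
sequence = bind(bind(permute(image, 2), permute(sound, 1)), command)

print(hamming(image, sound))     # unrelated items: roughly 0.5
print(hamming(group, image))     # group member: clearly below 0.5
print(hamming(sequence, image))  # a sequence does not resemble its parts
```

The point of the sketch is that every result, whether a single item, a grouping, or a sequence, is a vector of the same length, so all of them can live in the same space.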

By utilizing these vectors, the researchers look to keep all sensory information the robot receives in one place, essentially creating its memories. As more information gets stored, “history” vectors would be created, increasing the robot’s memory content.
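A rough sketch of how such a memory could be built and queried follows. It assumes, purely for illustration, that each experience is a perception vector XOR-bound to an action vector and that the "history" vector is a bitwise majority vote over those records; the percept and action names are hypothetical and do not come from the paper.

```python
import random

DIM = 10_000  # assumed vector length

def hamming(a, b):
    """Fraction of differing bits between two DIM-bit ints."""
    return bin(a ^ b).count("1") / DIM

def majority(vectors):
    """Bitwise majority vote over an odd number of DIM-bit ints."""
    out = 0
    for bit in range(DIM):
        if 2 * sum((v >> bit) & 1 for v in vectors) > len(vectors):
            out |= 1 << bit
    return out

rng = random.Random(1)

# Hypothetical codebooks: one random vector per percept and per motor command.
percepts = {n: rng.getrandbits(DIM) for n in ("wall", "doorway", "open_space")}
actions = {n: rng.getrandbits(DIM) for n in ("turn_left", "go_straight", "speed_up")}

# Each experience pairs what was seen with what was done, via XOR binding.
experiences = [
    ("wall", "turn_left"),
    ("doorway", "go_straight"),
    ("open_space", "speed_up"),
]
records = [percepts[p] ^ actions[a] for p, a in experiences]

# The "history" vector superimposes all records in a single DIM-bit vector.
history = majority(records)

def recall_action(percept_name):
    """Unbind the percept from the history, then pick the closest known action."""
    noisy = history ^ percepts[percept_name]
    return min(actions, key=lambda name: hamming(noisy, actions[name]))

for percept_name, _ in experiences:
    print(percept_name, "->", recall_action(percept_name))
```

Unbinding returns only a noisy approximation of the stored action, which is why recall ends with a nearest-neighbor lookup against the known action vectors. In this sketch, adding more records to one history vector makes that approximation noisier.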

The scientists think that active perception and memories would make robots better at making autonomous decisions, anticipating future situations, and completing tasks.

This “pipeline” describes how data from a drone flight is recorded and translated into binary vectors that are integrated into memory through vector operations. This memory can then be recalled.

Credit: Perception and Robotics Group, University of Maryland.

The Hyperdimensional “pipeline”

“An active perceiver knows why it wishes to sense, then chooses what to perceive, and determines how, when and where to achieve the perception,” said Yiannis Aloimonos, one of the researchers. “It selects and fixates on scenes, moments in time, and episodes. Then it aligns its mechanisms, sensors, and other components to act on what it wants to see, and selects viewpoints from which to best capture what it intends. Our hyperdimensional framework can address each of these goals.”

Beyond robotics, the scientists also see applications of their theory in the deep learning methods used for data mining and visual recognition.

To test the theory, the team employed a dynamic vision sensor (DVS), which continuously captures the edges of objects as they move by, recording them as event clouds. Because it quickly focuses on the contours of the scene and on movement, this sensor is well suited to autonomous robot navigation. The data from the event clouds is stored in binary vectors, allowing the scientists to apply hyperdimensional computing.


The research was carried out by computer science Ph.D. students Anton Mitrokhin and Peter Sutor, Jr., along with Cornelia Fermüller, an associate research scientist with the University of Maryland Institute for Advanced Computer Studies, and computer science professor Yiannis Aloimonos, who advised Mitrokhin and Sutor.

Check out their paper “Learning sensorimotor control with neuromorphic sensors: Toward hyperdimensional active perception” in Science Robotics.