Can the “Lesbian Rule” Fight Weaponized Math?

1. It’s time to bring back the “Lesbian Rule.” Aristotle wrote about it, in Shakespeare’s day it was proverbial, and today its lessons apply to what Cathy O’Neil calls “Weapons of Math Destruction.”

2. O’Neil exposes software models as digital deciders that can be “opaque… and uncontestable (sic), even when they’re wrong” (a new kind of kinetic logic threat).

3. Aristotle believed laws can be “defective because of… generality.” So equity can “only be measured… like the leaden rule used by Lesbian builders… that rule is not rigid but can bend to the shape of the stone.” (Until ~1870 Lesbian didn’t mean female homosexual.)

4. For Aristotle equity outweighed generalized justice, so good judges, like Lesbian builders, bend universal rules “to fit the circumstances.”

5. Shakespeare pondered distinctions between justice, equity, and equality. See “The Lesbian Rule of Measure for Measure’s” inflexible rules, King Lear’s “social arithmetics,” or “false equality” and erring quantitative equations in The Tempest.

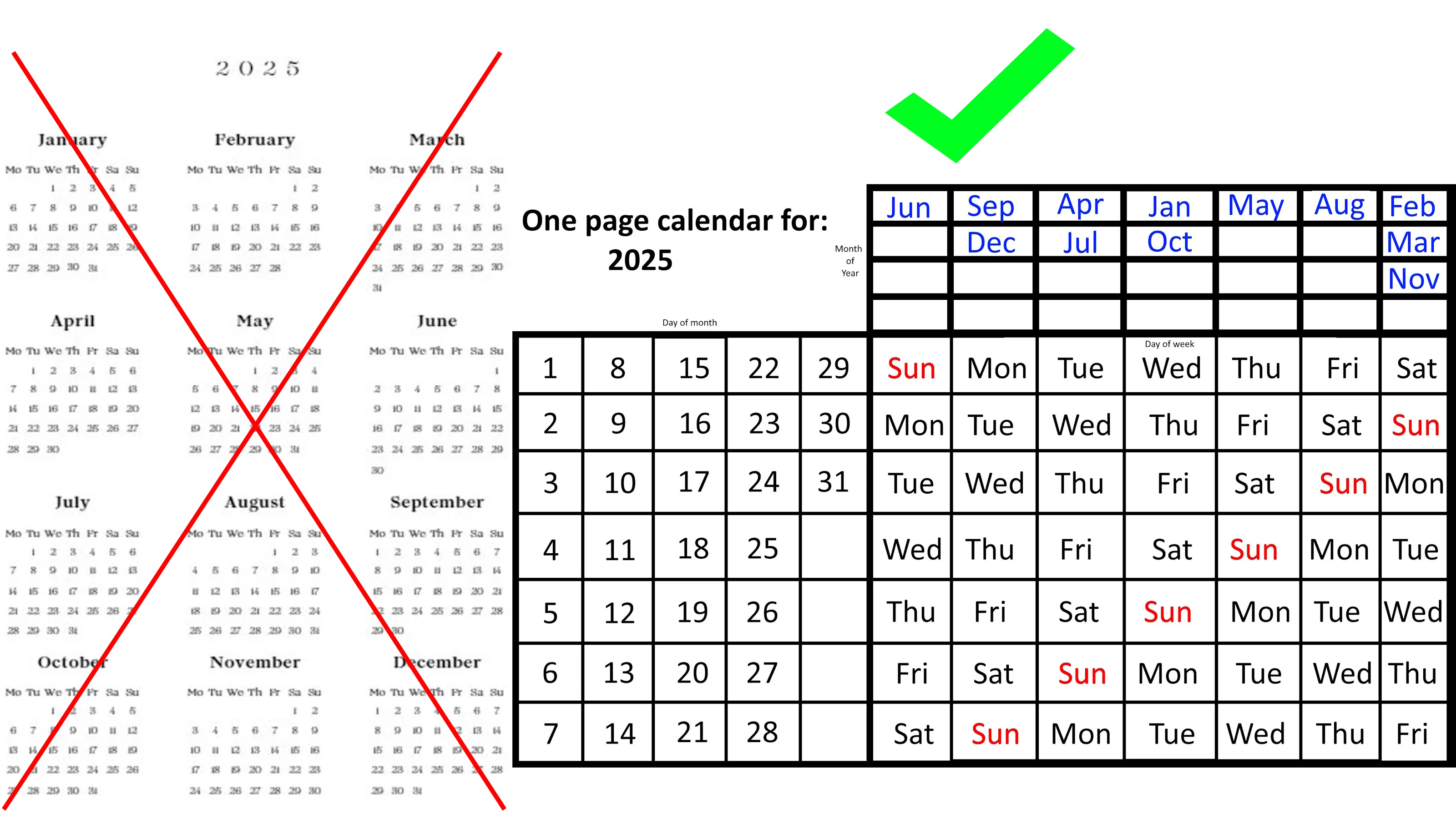

6. In our math-intoxicated times we’re often at the mercy of the rigid-ruled robo-judgments of algorithms. O’Neil details their math-driven harms across fields like finance, education, justice, and democracy.

7. Models and metrics seemingly offer objective and fair judgments, but they often encode “prejudice, misunderstanding, and bias.” And predictive models can perpetuate injustice (e.g., algorithmic discrimination in sentencing and recidivism models).

8. And metrics can distort: what gets measured often gets gamed. For instance, one university instantly improved its research rating by paying adjuncts $72,000 for 3 weeks of teaching if they reassigned old research to their new university.

9. Plus, much resists quantification. For instance, hammering all the complexity of good teaching into one number risks being “a statistical farce” (e.g. this New York Public School teacher rating seesawed wildly).

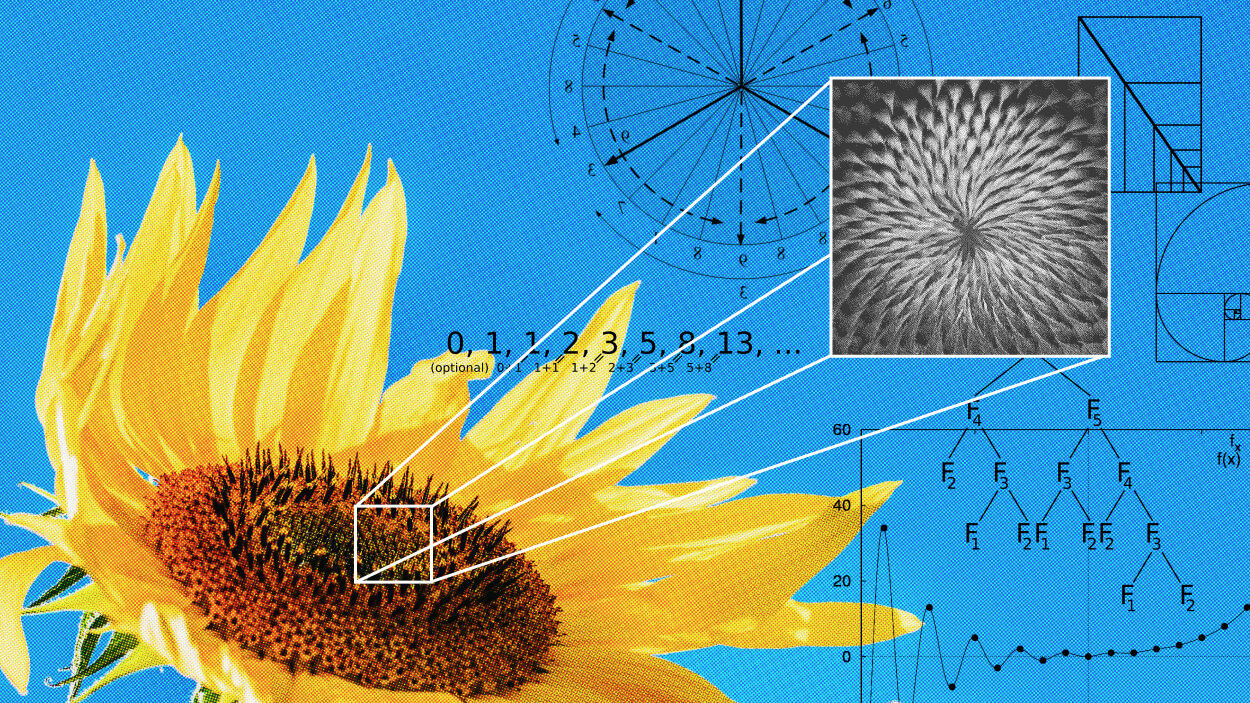

11. Sometimes the smart-seeming move of focusing on the metrics and the math misleads. What math-mesmerized experts forget is that not all logic works like math (here’s an example of locally valid steps not logically accumulating like math overall).

12. Avoiding low-quality quantification, or data-driven dumbness, requires non-numeric logic and circumstance-fitting metaphor (see how data is like poetry).

13. Lesbian Rule thinking now means asking: Is all the needed truth in the data? Are the relevant realities squeezed into the model’s rules? Biases countered? Exceptions handled? Redress enabled?

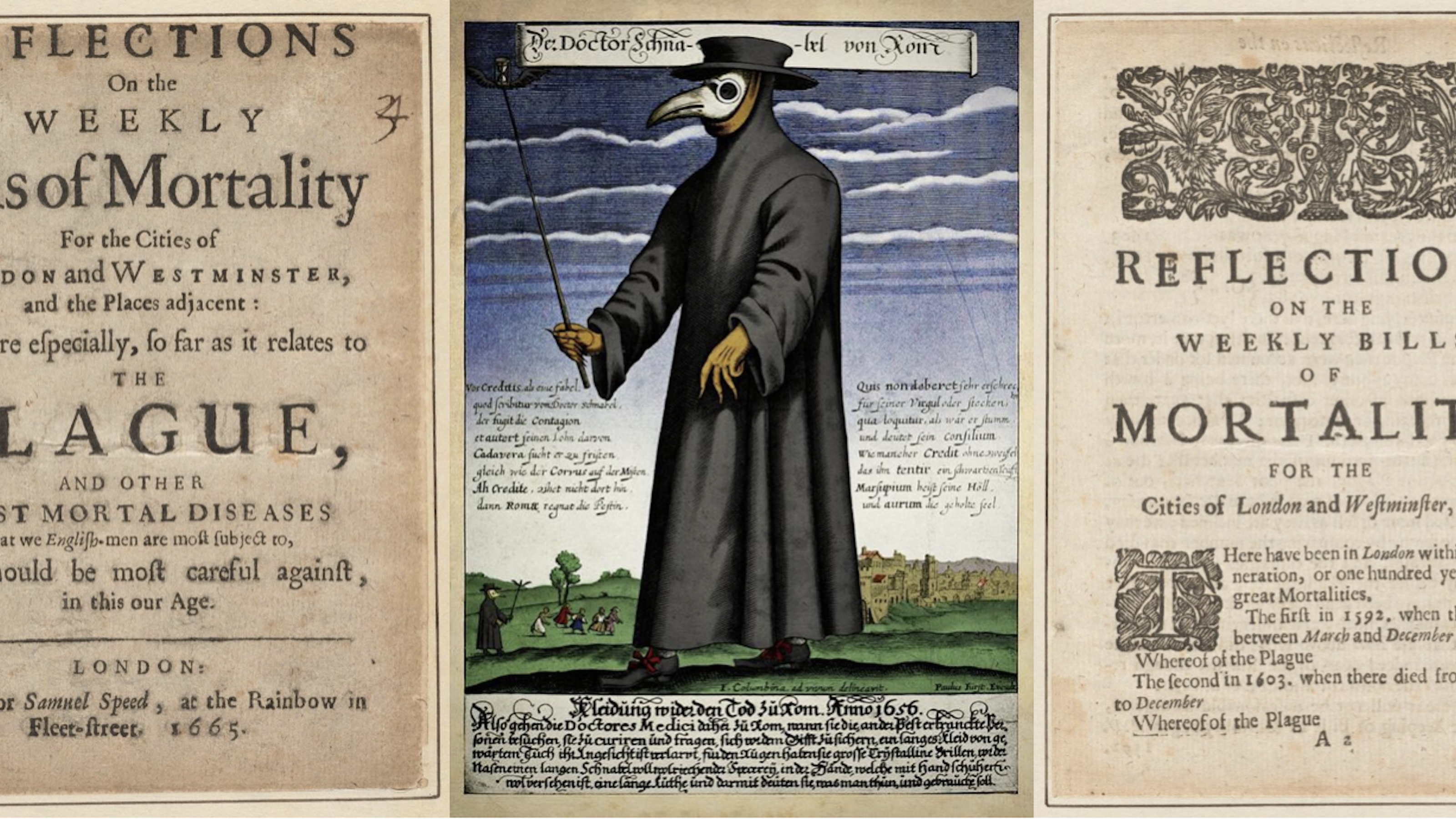

14. Per Aristotle, legal codes have long encoded the need for judges to tailor justice (situationally, equitably). Legal norms (mitigation, recourse, conflict-of-interest avoidance) provide good models for algo-ethics (aside: lawyers are among the few still trained in non-numeric logic).

15. Ethics are expensive, since they still need humans, and we don’t scale like silicon deciders. But we can’t count on markets “to right these wrongs” (see Obama on cherry-picking business models).

16. O’Neil says putting “fairness ahead of profit” means explicitly embedding “better values into our algorithms” (plus using audits, transparency, and Hippocratic oaths).

17. Algorithms offer great gains and efficiencies, but these rigid-ruled robo-judges are also clear and present dangers. We court disaster if justice remains blind to their systemic risks.

—

Illustration by Julia Suits, The New Yorker cartoonist & author of The Extraordinary Catalog of Peculiar Inventions