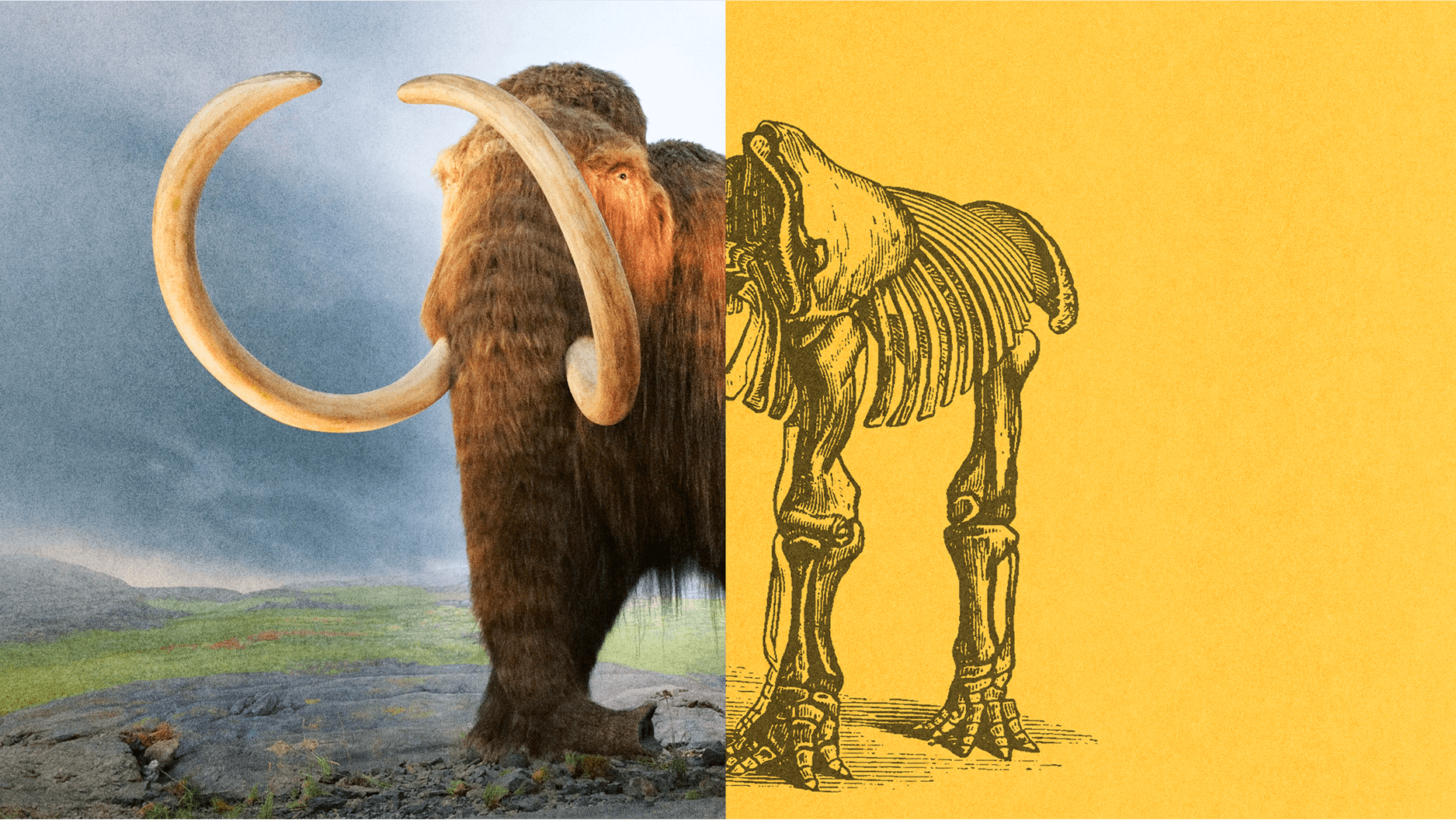

How do you tell reality from a deepfake?

ROB LEVER/AFP via Getty Images

When Donald Trump belatedly acknowledged defeat two months after last year’s US presidential election, some news reports zeroed in on a fundamental question: whether his speech had actually happened at all.

The dramatic proliferation of deepfakes – online imagery that can make anybody appear to do or say anything within the limits of one’s imagination, cruelty, or cunning – has begun to undermine faith in our ability to discern reality.

Recent advances in the technology have shocked even seasoned technology observers, and have made anyone with a phone and access to an app like Avatarify capable of producing a passable deepfake.

According to one startup’s estimate, the number of deepfake videos online jumped from 14,678 in 2019 to 145,277 by June of the following year, a nearly tenfold increase. Last month, the FBI warned that “malicious actors” will likely deploy deepfakes in the US for foreign influence operations and criminal activity in the near future. Around the world, there are concerns the technology will increasingly become a source of disinformation, division, fraud and extortion.

When Myanmar’s ruling junta recently posted a video of someone incriminating the country’s detained civilian leader, it was widely dismissed as a deepfake. Last year, a video of the Belgian prime minister linking COVID-19 to climate change turned out to be a deepfake, and Indian politician Manoj Tiwari’s use of the technology for campaigning caused alarm. In Gabon, belief that a video of the country’s ailing president was a deepfake triggered a national crisis in early 2019.

Manipulating images to alter public perception for political reasons dates back at least as far as Stalin – who famously deleted purged comrades from official photos.

However, some say a more pressing issue is the increased vulnerability of non-public figures to online assault. Indian journalist Rana Ayyub has detailed attempts to silence her using deepfake pornography, for example.

Some argue the threat of the technology itself is overhyped – and that the real problem is that bad actors can now dismiss video evidence of wrongdoing by crying “deepfake” in the same way they might dismiss media reports that they dislike as “fake news.”

Still, according to one report, technically sophisticated, “tailored” deepfakes present a significant threat; these may be held in reserve for a key moment, like an election, to maximize impact. As of 2020, the estimated cost of the technology needed to churn out a “state-of-the-art” deepfake was less than $30,000, according to the report.

The term “deepfakes” entered popular use in 2018, and its origin was sordid. Calls to regulate or ban them have grown since then; related legislation has been proposed in the US, and in 2019 China made it a criminal offense to publish a deepfake without disclosure. Facebook said last year it would ban deepfakes that aren’t parody or satire, and Twitter said it would ban deepfakes likely to cause harm.

It’s been suggested that the best way to inoculate people against the danger of deepfakes is through exposure. A variety of efforts have been made to help the public understand what’s at stake.

Last year, the creators of the popular American cartoon series “South Park” posted the viral video “Sassy Justice,” which features deepfaked versions of Trump and Mark Zuckerberg. They explained in an interview that anxiety about deepfakes may have taken a back seat to pandemic-related fears, but the topic nonetheless merits demystifying.

For more context, here are links to further reading from the World Economic Forum’s Strategic Intelligence platform:

- A growing awareness of deepfakes meant people were quickly able to spot bogus online profiles of “Amazon employees” bashing unions, according to this report – though a hyper-awareness of the technology could also lead people to stop believing in real media. (MIT Technology Review)

- The systems designed to help us detect deepfakes can be deceived, according to a recently published study: inserting “adversarial examples” into every video frame can trip up the machine learning models behind them. (Science Daily)

- Authoritarian regimes can exploit cries of “deepfake.” According to this opinion piece, claims of deepfakery and video manipulation are increasingly being used by the powerful to claim plausible deniability when incriminating footage surfaces. (Wired)

- It’s easy to blame deepfakes for the proliferation of misinformation, but according to this opinion piece the technology is no more effective than more traditional means of lying creatively – like simply slapping a made-up quote onto someone’s image and sharing it. (NiemanLab)

- A recently published study found that one in three Singaporeans aware of deepfakes believe they’ve circulated deepfake content on social media that they later learned was part of a hoax. (Science Daily)

- “It really makes you feel powerless.” Deepfake pornography is ruining women’s lives, according to this report, though a legal solution may be forthcoming. (MIT Technology Review)

- “A propaganda Pandora’s box in the palm of every hand.” Deepfake efforts remain relatively easy to detect, according to this piece – but soon the same effects that once required hundreds of technicians and millions of dollars will be possible with a mobile phone. (Australian Strategic Policy Institute)

Reprinted with permission of the World Economic Forum.